Semi-Autonomous Navigation of an Assistive Robot Using Low Throughput Interfaces

PhD thesis of Xavier Perrin (2009) ETH Zurich

In this thesis, a semi-autonomous navigation system for assistive robots is presented. Based on a novel human-robot interaction principle, it is particularly well suited to low throughput user interfaces. The robotic system automatically stops at locations where a navigational decision has to be made and proposes a meaningful action to its user. The user then either agrees or disagrees with the proposition; the robot accordingly either executes the proposed action or proposes an alternative action. The proposition is chosen so as to minimize the number of required interactions. The main foreseen application of our navigation system is powered wheelchairs. However, in this thesis, we use a simple differential-drive robot for testing our concept. Furthermore, the system does not depend on a particular robotic platform, thanks to the decoupling between the low-level platform control, which we treat as a black box, and the high-level intelligence, on which we focus.

When the robot is moving in unknown environments, the analysis of its sensory data allows for a correct distinction between different topology types (e.g. crossings). At a topological change, the robot engages in a dialog with the human user to select the next direction of travel. In known environments, a provided topological map already contains the places of interest where a human-robot interaction should take place, thus simplifying the robot's reasoning. Furthermore, the main advantage of being in a known environment is the opportunity to learn stereotypical user movements, i.e. the user's habits. The presented system learns not only the raw trajectories, but also their dependence on contextual information such as the time of day or specific events (e.g. a phone ringing). The system is then able to propose better suited actions or even goal destinations, speeding up the action selection and reducing the required user involvement. Compared to the sustained engagement required of the user under manual robot control, our system is based on few and simple dialogs at a high level of abstraction, the highest level being attained when proposing goal destinations. This contributes to an overall low user engagement.

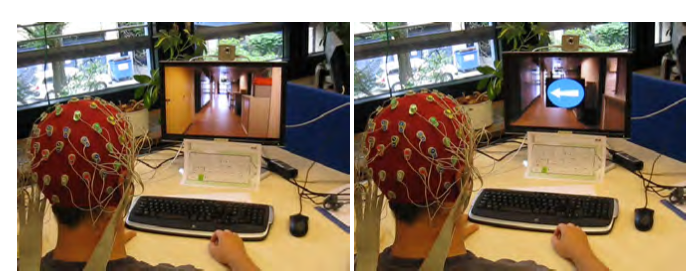

Although our system would be well adapted to commercially available low throughput interfaces such as single switches, voice recognition or sip-and-puff systems, we explore the use of a promising electroencephalography (EEG) based brain-computer interface (BCI) for steering the system. This choice is motivated by the wish to lower the user involvement in terms of interface use: relying on the brain as a new communication channel frees the user from steering the system by pressing a button, blowing into a tube, speaking a command, or even looking at a target. For our work, we focus on the error-related potential, a specific spontaneous brain signal indicating the user's disagreement with a proposition. Compared to other BCI approaches, this signal, which is associated with a high-level cognitive state, perfectly suits the abstraction level of our robot intelligence and contributes to a low user engagement. Of course, relying on a BCI brings additional challenges in terms of reliability. We thus have to take into account the uncertainty of such an interface and ensure that the BCI is tuned to its particular user.

In order to take into account the interface uncertainty, Bayesian techniques are applied to the selection of actions. Furthermore, the variable nature of the environment, where unforeseen situations are often encountered, leads us to rely on probabilistic approaches for the analysis of the sensory data. Finally, we use Bayesian reasoning to model the habits of the user, as they evolve day after day and as unexpected events or movements may take place.
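As an illustration of how Bayesian reasoning can absorb interface uncertainty during action selection, the following minimal sketch updates a belief over candidate actions after a noisy agree/disagree answer and then proposes the most probable action. It is not the model developed in the thesis: the function names, the symmetric interface accuracy `p_correct`, and the example prior are assumptions made purely for illustration.

```python
def update_belief(belief, proposed, agreed, p_correct=0.8):
    """Bayes update of P(intended action) after a noisy yes/no answer.

    belief    -- dict mapping action name to prior probability
    proposed  -- the action the robot just proposed
    agreed    -- True if the interface reported agreement
    p_correct -- assumed probability that the interface reports the
                 user's true answer (hypothetical, symmetric error model)
    """
    posterior = {}
    for action, prior in belief.items():
        user_wants_proposal = (action == proposed)
        # Likelihood of the observed answer if `action` is the intended one.
        if user_wants_proposal == agreed:
            likelihood = p_correct
        else:
            likelihood = 1.0 - p_correct
        posterior[action] = prior * likelihood
    total = sum(posterior.values())
    return {action: p / total for action, p in posterior.items()}


def propose(belief):
    """Propose the currently most probable action."""
    return max(belief, key=belief.get)


# Example prior at a crossing (illustrative numbers only).
belief = {"left": 0.5, "forward": 0.3, "right": 0.2}
first = propose(belief)
# The interface reports a disagreement with the first proposition.
belief = update_belief(belief, first, agreed=False)
second = propose(belief)
```

Because the answer is treated as uncertain, a reported disagreement lowers the probability of the rejected action without eliminating it, so the action can be proposed again if later answers point back to it.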

Two user studies are conducted in order to determine the best parameters for both proposing actions to the user and managing the human-robot dialog. Precise propositions are required in order to directly convey information from the machine to the human. The more salient they are, the better the EEG signals and thus the human answer. Then, given the current situation encountered by the robot and the uncertainty in the user responses, an optimal dialog management ensures minimal user involvement in the selection of an action.

In addition to the human-robot interaction studies, the system is thoroughly tested experimentally in both known and unknown environments. We show that the topology classification, and thus the recognition of places of interest, performs as expected and is able to correctly interpret unforeseen situations. In known environments, the habit learning and the possibility to propose goal destinations improve the performance of the system. Better and more specific propositions are made and, as a consequence, the user involvement in terms of number of interactions is lowered.

We then compare our system with existing ones and highlight its advantages: low user involvement, simplicity of the human-robot interaction, and rapid selection of an action or goal destination while fulfilling similar navigational tasks. It is thus a promising alternative for future assistive robots, including intelligent wheelchairs. Finally, we mention some potential improvements of the system and sketch its possible adaptations to other engineering domains.